One of the most interesting datasets I have is my own Apple Health data, which I’ve always liked playing with. Some years ago, I shared a post analyzing the data in a Jupyter Notebook. It is now 2021, and Grafana is a popular tool among software developers for visualizing system metrics. It’s an excellent and very flexible piece of software. So I thought, why not try to get my data in there, so that I can build better charts than Apple’s current apps offer: the sum of steps per year rather than a monthly average in the yearly view, for example.

After a few failed attempts over the past month, I finally succeeded in plotting my own Apple Health data in Grafana.

I needed a few pieces of software to build the charting pipeline to Grafana:

- InfluxDB (Time-series data store)

- Health Auto Export (iOS app, paid; at the time of writing it’s a one-off $1.99)

- A Python Flask server that converts the Health Auto Export JSON to the InfluxDB data format

- Grafana to chart the data

I set this up on my local network, so that I can open the Grafana UI from Safari on my iPad. It is not extraordinarily secure, but it is enough for an MVP. Also, since I run the database and Grafana servers on demand, the data is inaccessible unless I make the effort to start it all up again. The pipeline is easily reproducible in the cloud with a lambda function and 2 endpoints for InfluxDB and Grafana, but I decided to save the cost, since I’ll probably look at this 3-4 times a year.

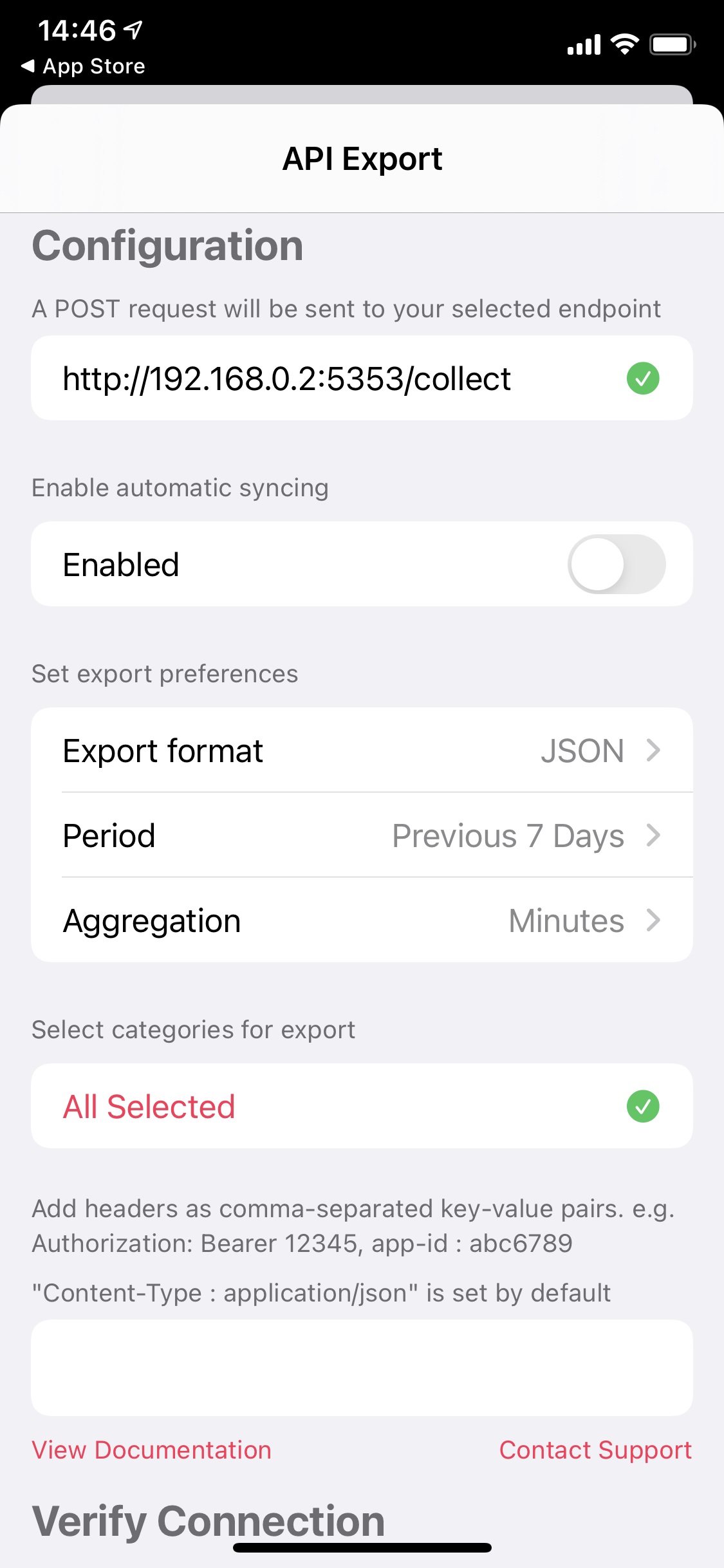

Here’s how the pipeline works. I send my Health data (steps, exercise minutes, etc.) via an HTTP POST request from an iOS app called Health Auto Export. It’s the only app I found on the App Store that sends data over HTTP in JSON format. I wrote a Python Flask server which collects the POST-ed JSON data and transforms it into the right format to store in the local InfluxDB instance. Then I connect Grafana to the database and voila. It’s really that simple. The most time-consuming part was writing the data transformation, making it reusable for any Health metric, and also ingesting the workouts’ geospatial data so I can plot them on a map. I’ve published it on GitHub. The best part is that updating the collected data no longer requires redownloading the massive Health XML export from the Apple Health app every time. If I want to ingest the last 3 days, for example, I can now send only the last 3 days of data.
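
As a rough illustration of the transformation step, here is a minimal sketch. It is not the code published on GitHub. It assumes the exported JSON nests samples under data -> metrics -> data, with a date string and numeric values such as qty; the function names and that payload layout are assumptions for illustration. The output uses the point dictionaries (measurement, tags, time, fields) that the influxdb Python client accepts in write_points. In a Flask route, the payload would come from the POST body.

```python
from datetime import datetime, timezone


def metric_to_points(metric):
    """Convert one exported metric into a list of InfluxDB point dicts."""
    points = []
    for sample in metric.get("data", []):
        # Assumed timestamp layout: "2021-03-01 08:00:00 +0100".
        when = datetime.strptime(sample["date"], "%Y-%m-%d %H:%M:%S %z")
        # Every numeric key other than the timestamp becomes a field.
        fields = {
            key: float(value)
            for key, value in sample.items()
            if key != "date" and isinstance(value, (int, float))
        }
        if not fields:
            continue  # nothing numeric to store for this sample
        points.append({
            "measurement": metric["name"],
            "tags": {"units": metric.get("units", "unknown")},
            # Store timestamps in UTC so samples from different zones line up.
            "time": when.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ"),
            "fields": fields,
        })
    return points


def payload_to_points(payload):
    """Flatten a whole export payload into one list of points."""
    points = []
    for metric in payload.get("data", {}).get("metrics", []):
        points.extend(metric_to_points(metric))
    return points


# Hypothetical payload in the assumed layout, for illustration only.
example_payload = {
    "data": {
        "metrics": [
            {
                "name": "step_count",
                "units": "count",
                "data": [{"date": "2021-03-01 08:00:00 +0100", "qty": 512}],
            }
        ]
    }
}
```

Because the function does not care which metric it receives, the same code path would handle steps, exercise minutes, or anything else that follows the assumed layout.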

I did the setup on a Windows gaming rig, but it would work on any of the other operating systems, since all of the software is cross-platform.

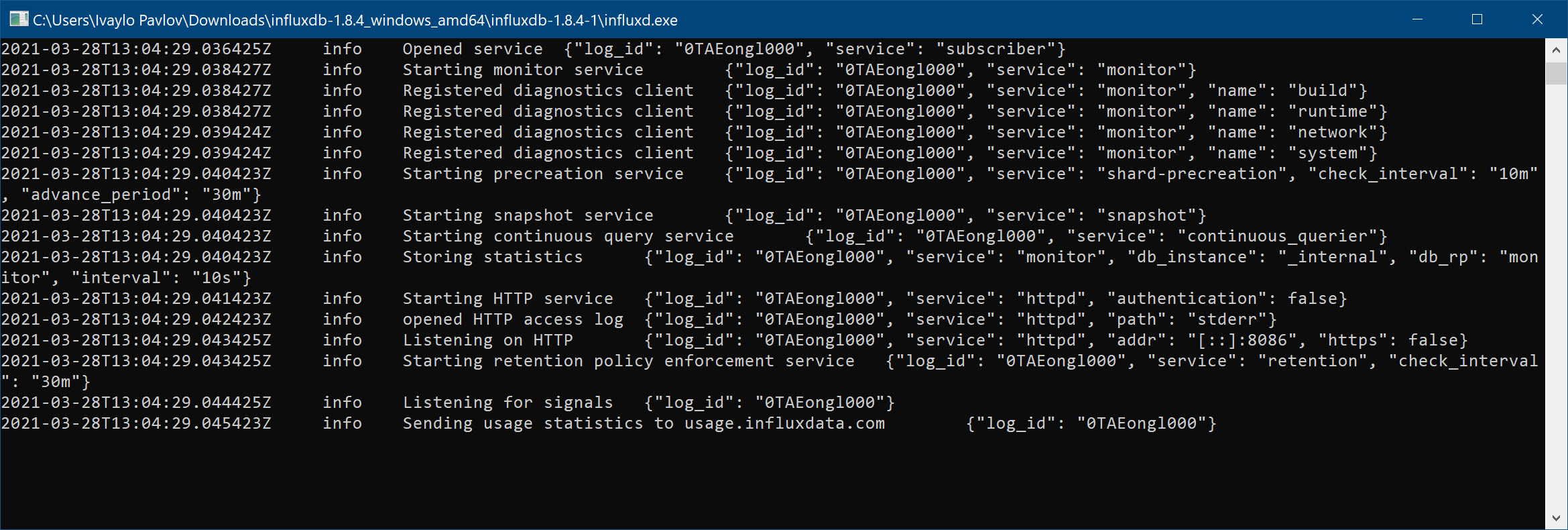

The first piece was the InfluxDB data store. After downloading and extracting the Windows ZIP, I just had to start influxd.exe. I went with the older version, 1.8.4, instead of v2, since I’m more familiar with the old SQL-like syntax and didn’t feel like learning the new query language called “Flux”. It looks like this.

InfluxDB does send anonymous usage statistics, which can be disabled with an environment variable (INFLUXDB_REPORTING_DISABLED=true in the 1.x releases); more details are in this GitHub Issue. Since I didn’t store any sensitive data, I was not concerned and left it on. My only thought was whether they use InfluxDB to collect usage metrics on InfluxDB.

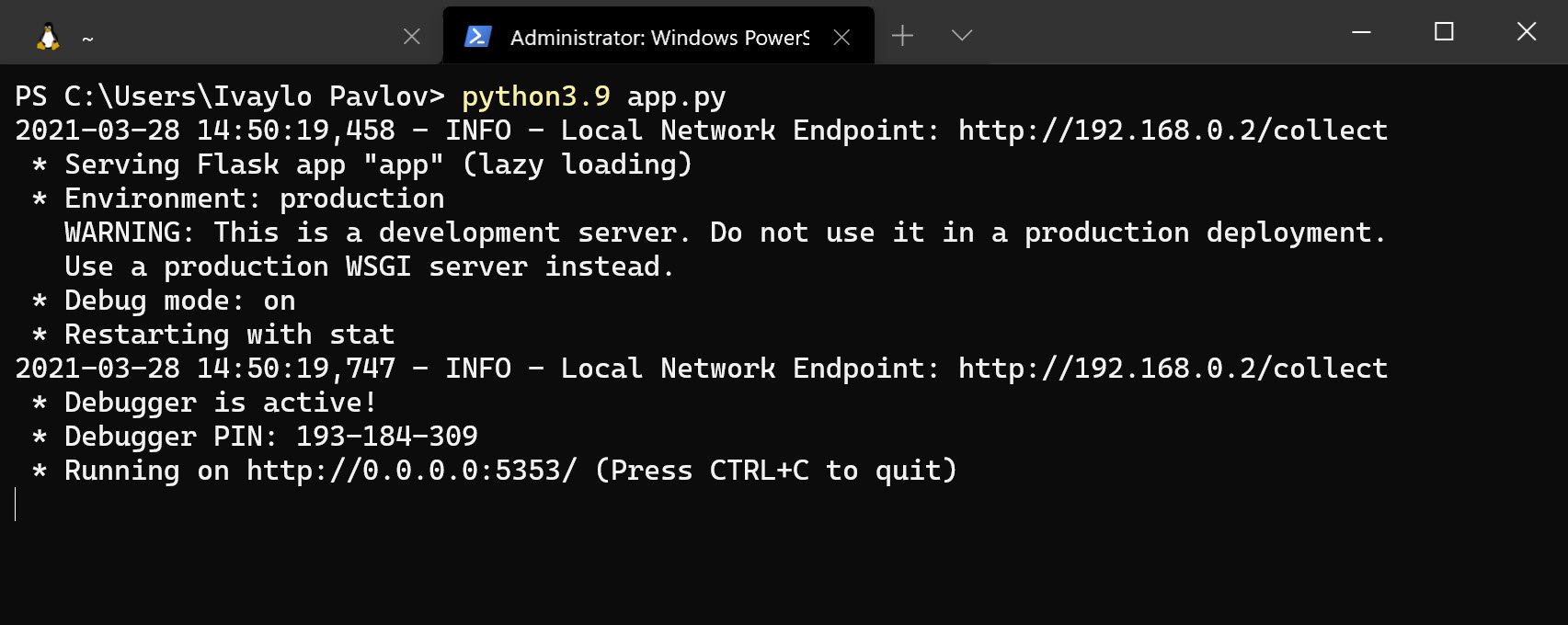

I ran the Python Flask server I wrote with python3.9 app.py, and had it print the machine’s IP address on the network so that I knew which endpoint to enter in the app.

I had exposed port 5353, so the URL I had to enter was http://192.168.0.2:5353/collect
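
One common way to find and print that address from Python is sketched below. This is an illustration rather than the exact code in app.py; the helper names are hypothetical, and the port and path defaults simply mirror the values above.

```python
import socket


def local_ip(fallback="127.0.0.1"):
    """Best-effort guess at this machine's address on the local network."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        # Connecting a UDP socket sends no packets; it only makes the OS
        # choose the outgoing interface, whose address we then read back.
        sock.connect(("192.0.2.1", 80))
        return sock.getsockname()[0]
    except OSError:
        # No usable route (e.g. offline machine): fall back to loopback.
        return fallback
    finally:
        sock.close()


def collect_url(ip, port=5353, path="/collect"):
    """Build the URL to type into the export app."""
    return f"http://{ip}:{port}{path}"


if __name__ == "__main__":
    print("Send exports to:", collect_url(local_ip()))
```

On a machine with several network interfaces the reported address may not be the one the phone can reach, so it is worth checking it against the router’s device list.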

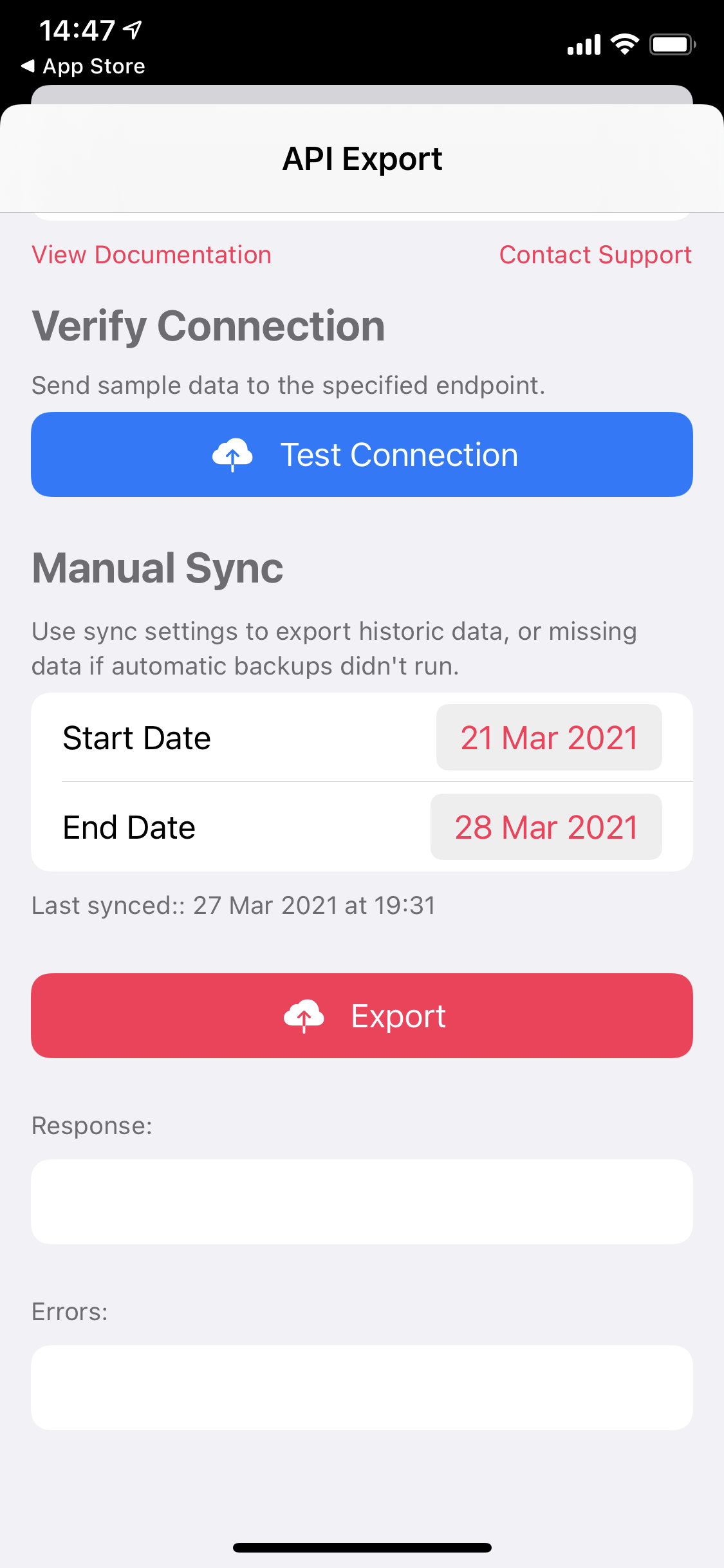

After tapping Export, I received a Success message.

If I tried to export all my data in one go (5-6 years), the iOS app crashed, so I had to export about 3-4 months at a time. That was not a big issue, since each export took less than 2-3 minutes. My iPhone is now 4 years old, so newer models with faster CPUs probably cope better. I had roughly 1 million data points per 4.5 months, so I was happy with the result. Python transformed them and InfluxDB ingested them surprisingly quickly, granted that everything ran locally.
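
To plan the manual exports, a small helper can list the date ranges to pick in the app. This is only a planning sketch: the function names are hypothetical, the 3-month default follows the chunk size mentioned above, and the export itself still happens by hand in the iOS app.

```python
from datetime import date


def add_months(day, months):
    """Return the first day of the month that is `months` after `day`'s month."""
    index = day.year * 12 + (day.month - 1) + months
    return date(index // 12, index % 12 + 1, 1)


def export_windows(start, end, months=3):
    """Split [start, end) into consecutive windows of at most `months` months."""
    windows = []
    cursor = start
    while cursor < end:
        # Each window ends on a month boundary, or at `end` if that comes first.
        upper = min(add_months(cursor, months), end)
        windows.append((cursor, upper))
        cursor = upper
    return windows


if __name__ == "__main__":
    for lower, upper in export_windows(date(2016, 1, 1), date(2021, 5, 1)):
        print(lower.isoformat(), "->", upper.isoformat())
```

Each window’s end date is the next window’s start date, so no day is skipped when the ranges are entered one after another.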

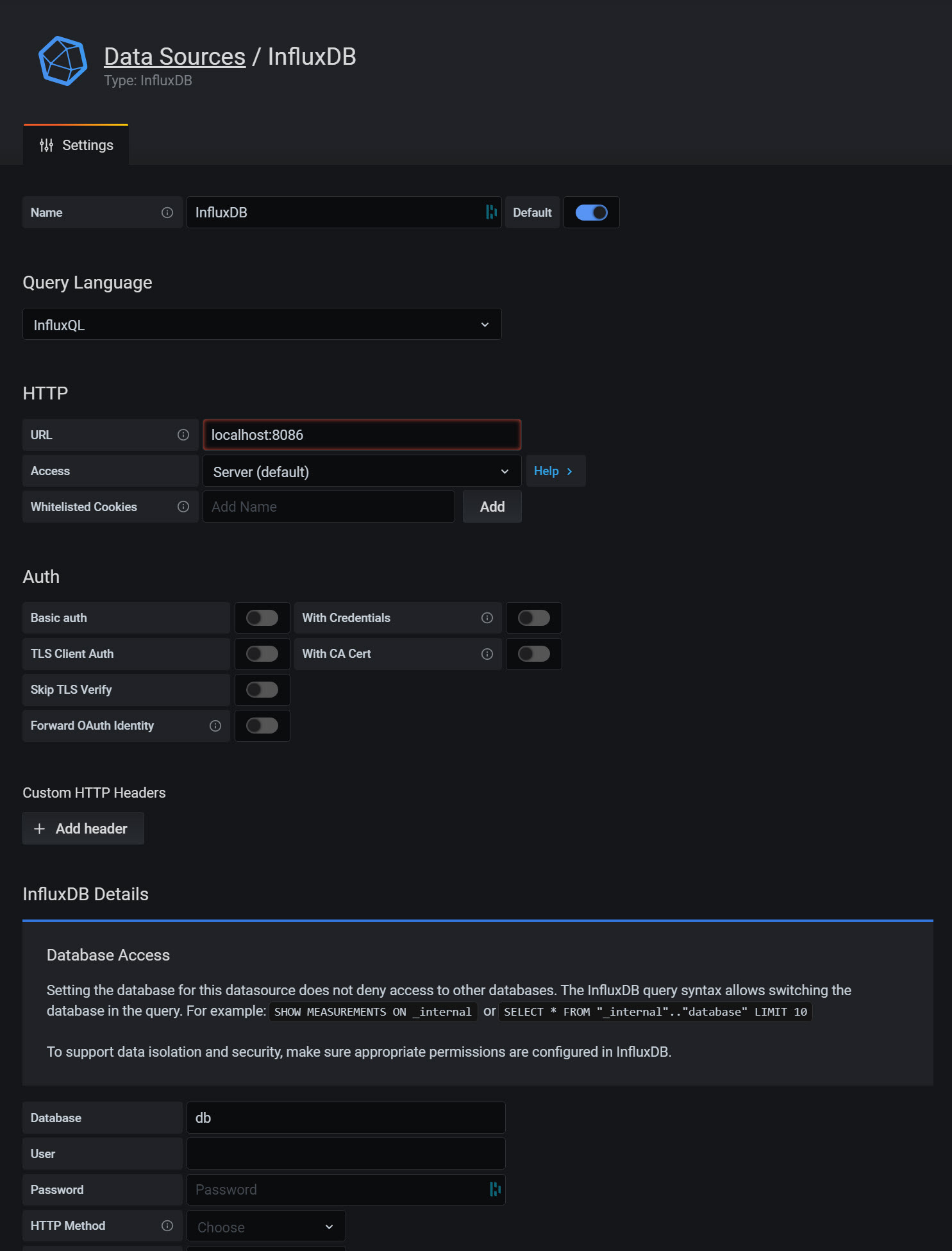

Installing Grafana was just a matter of running the binary downloaded from the official website, although it did require a PC restart. After the reboot it was readily available at http://localhost:3000, since it runs as a Windows service. To connect it to the data, I went to Settings -> Data Sources -> InfluxDB, entered the address http://localhost:8086 and the default database name db, which is what I had set in the ingestion script, then clicked Save & Test and was done.
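
With the data source connected, panels are driven by InfluxQL queries. The sketch below builds two such query strings in Python so they are easy to adapt. The measurement name step_count and the field name qty are assumptions about how the ingestion script names things; $timeFilter is the macro Grafana replaces with the dashboard’s selected time range.

```python
def daily_sum_query(measurement, field="qty", interval="1d"):
    """InfluxQL for a Grafana panel: sum of a field per interval."""
    return (
        f'SELECT sum("{field}") FROM "{measurement}" '
        f"WHERE $timeFilter GROUP BY time({interval}) fill(null)"
    )


def yearly_total_query(measurement, year, field="qty"):
    """InfluxQL for the total of a field over one calendar year (UTC)."""
    # InfluxQL intervals go up to weeks, so a calendar year is expressed
    # as an explicit time range instead of a GROUP BY interval.
    return (
        f'SELECT sum("{field}") FROM "{measurement}" '
        f"WHERE time >= '{year}-01-01T00:00:00Z' "
        f"AND time < '{year + 1}-01-01T00:00:00Z'"
    )


if __name__ == "__main__":
    print(daily_sum_query("step_count"))
    print(yearly_total_query("step_count", 2020))
```

The first string can be pasted into a panel’s raw query editor; the second answers the steps-per-year question directly, one year per query.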

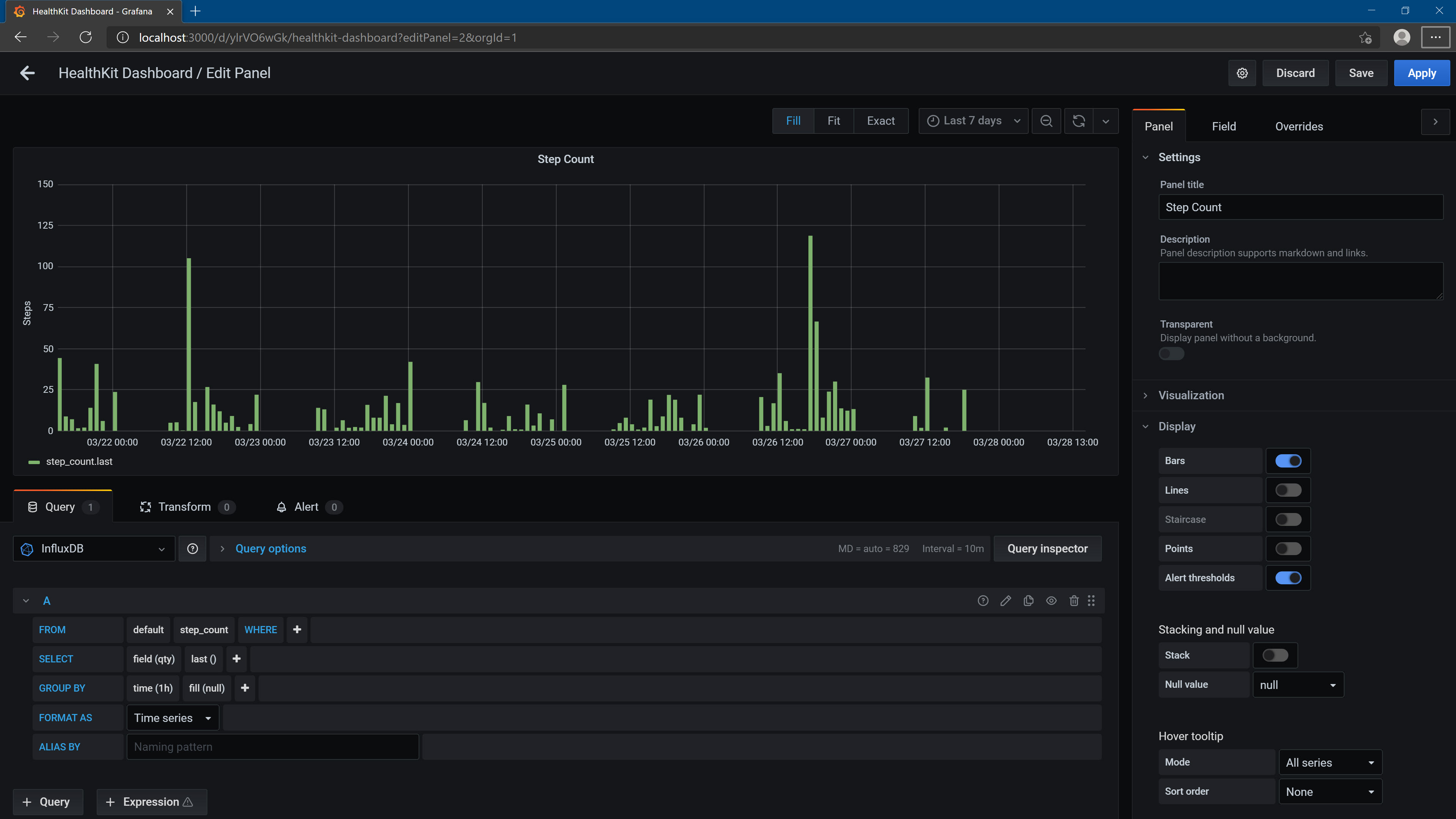

Here are some of the panels in the dashboard I set up.

If you found the post interesting, please consider sharing. See you next time!

2 Comments

Online Training · 14/06/2021 at 5:20 am

Nice article. Thank you for sharing.

blacked · 16/05/2021 at 2:08 pm

Hi,

thank you very much for that!!!

I make a VM with launch your script as service and it is work perfectly